While catching up on previous seasons/episodes of the “Black Folk Don’t…” web series that have been featured here on S&A, I found that this one in particular – “Black Folk Don’t… Travel” – inspired a question in me. First watch it below, and continue reading after the video.

So… it got me thinking about the many films covered here on S&A over the years that feature, most often, a white man or woman (usually American or from continental Europe) in an African country. We’ve seen quite a number of films about white Americans or white Europeans either already living in Africa, or visiting some African country, in search of something or someone – whether it’s salvation, redemption, inspiration, vacation, themselves, their spouses, children, friends, their dogs, cats, apes, whatever; and it’s rare that they’re villains or in positions of inferiority.

Also, those that are historically based usually involve white *settlers* (or remnants of colonialism) who come to see themselves as native to the land that their ancestors once occupied.

And in thinking further about this, I realized that I can’t come up with many titles of fictional narrative feature films that center on stories about specifically African Americans in Africa. It’s not like black Americans don’t travel, right? Or more specifically, it’s not like black Americans don’t travel to/visit/live/work in African countries, right? I know more than a few who do.

Their reality just isn’t reflected on our screens, big and small, as has long been the case for much of the so-called black experience, so it’s nothing terribly shocking. But I’m just making an observation. I’m speaking in the spirit of what we call Pan-Africanism.

If Hollywood movies are any indication, one would think that white Americans and Europeans were the only group of people who traveled internationally; and these movies often feature lead characters who do some especially ignorant, insulting, cringe-worthy things (whether intentionally or not, on the part of the filmmakers).

So there I was wondering… it would be refreshing to see more films about African Americans outside the USA, specifically in Africa (although let’s face it, it would be just as refreshing to see a wider variety of films about African Americans in America to begin with). But I can’t think of many films with that (African Americans in Africa) as a basis for the story.

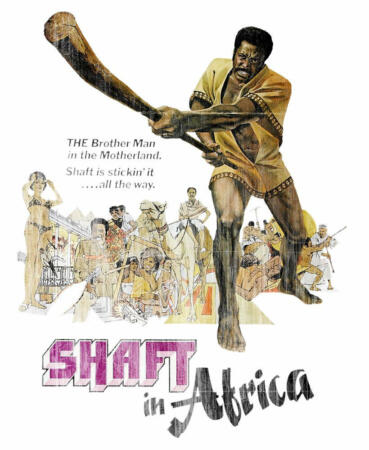

Does “Shaft In Africa” (photo above) count? Haile Gerima’s “Sankofa” is another. And to be clear, I don’t mean films that star African Americans playing Africans (there are certainly numerous examples of those); nor am I including documentaries. I’m thinking of narrative fiction feature films with stories centered on black Americans either visiting a country (or several countries) in Africa for whatever reason, or who are already living in an African country.

Can you name any? Maybe I’m suffering from some form of temporary amnesia, and just can’t remember any films that fit the criteria. Preferably films made in the last 20 years. But if we have to go even further back, then so be it.

Discussions abound about unifying the Diaspora; it’s not quite happening in real life from where I’m standing (see recent debates about black American versus black British actors, for example); but at least, in the fantasy, make-believe world of the cinema, we can pretend, or show what could (or could not) be, right?

Black folks travel (internationally… to African countries), don’t they?